Whether you’re new to Facebook ads or have been running them for some time but are still searching for the best way to structure campaigns, this post is for you.

Trying to create an effective campaign can feel stressful at times.

But in this post, we’ll help you simplify your campaigns so running ads won’t seem too daunting to think about.

We’ll help you structure:

- Conversion Campaigns

- Retargeting Campaigns

- Video Views Campaigns

- Website Traffic Campaigns

- Scaling Campaigns

We’ll also include best practices, tips on choosing the right bid strategy, and a guide to creating compelling copy for the ads.

If you’re ready to streamline your ad creation using simplified campaigns, then let’s get started.

Conversion Campaigns

There is no black and white formula for creating high-converting Facebook ads. To get the best results, you have to do rigorous testing to determine who is best to target and what ads work best.

Once you get this figured out, you can use the insights for scaling campaigns. But more on that later. For now, let’s focus on conversion campaigns.

What are conversion campaigns by the way?

Conversion campaigns are used to drive traffic to lead magnets like checklists, cheat sheets, abandoned cart email templates, onboarding email templates, and webinars.

Any campaign built around a free opt-in that encourages users to interact with your ad is a conversion campaign. The opt-in can also be a free trial for a tool like a PDF editor.

When creating a conversion campaign, we won’t use CBO (campaign budget optimization) yet, because CBO will not spend equally across ad sets. We want equal spending for each ad set to get accurate insight into which audiences work best.

Inside a conversion campaign, you’ll put in an ad set, and inside an ad set, an ad.

To make these 3 levels clearer, here’s a quick explanation:

- The campaign is the goal you have for the ad

- The ad set is the audience you’ll be targeting with the ad

- The ad is the creative itself

So in a conversion campaign, the sweet spot is putting 4-8 ad sets in it.

The goal is to separate different audiences so you can test which group offers the best conversions.

The ad sets can be a lookalike audience based on previous customers or leads, an interest-based group or two, and a broad interest group.

As mentioned earlier, we want equal spending across all ad sets in a conversion campaign. So let’s say you have 4 ad sets and a budget of $100 daily, split it equally so each ad set gets $25.
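The equal split is simple arithmetic, and it can be sketched in a few lines of Python. This is only an illustration: `split_budget` and the ad set names are hypothetical, not part of any Facebook tool, and the sketch works in cents so that rounding never changes the total.

```python
def split_budget(daily_budget_cents: int, ad_set_names: list[str]) -> dict[str, int]:
    """Divide a daily budget equally across ad sets (hypothetical helper)."""
    if not ad_set_names:
        raise ValueError("need at least one ad set")
    share, remainder = divmod(daily_budget_cents, len(ad_set_names))
    # Leftover cents go one each to the first ad sets so the total still matches.
    return {
        name: share + (1 if i < remainder else 0)
        for i, name in enumerate(ad_set_names)
    }


# $100 per day across 4 ad sets (names are made up for this example).
budgets = split_budget(10_000, ["lookalike", "interest_a", "interest_b", "broad"])
print(budgets)  # each ad set gets 2500 cents, i.e. $25
```

If the budget doesn’t divide evenly, the leftover cents go to the first ad sets, so the parts still add up to the daily total.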

Now for each ad set you want to create 3-4 ads at most.

If you put in more than 4 ads, some of them won’t get any spend because Facebook will only pick 3-4 ads to allocate the budget to. This leaves the rest of the ads in that ad set untested.

You don’t want this to happen because at this stage you are still testing, and you want every ad to get tested to see what converts best. If you put too many ads in one ad set, you won’t know the accurate performance of each ad.

If you think about it, overloading an ad set makes you lose the opportunity to make more sales, since you won’t know the potential of some ads.

But what if you have more than 4 ads to test?

That’s not a problem. You can simply duplicate the ad set and put 3-4 more ads to test in there.
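One way to picture the duplication step: take your full list of ads and cut it into groups of at most 4, one group per copy of the ad set. Here is a minimal sketch, assuming a hypothetical helper called `group_ads` and made-up ad names.

```python
def group_ads(ads: list[str], max_per_ad_set: int = 4) -> list[list[str]]:
    """Cut a list of ads into groups of at most `max_per_ad_set` (hypothetical helper)."""
    if max_per_ad_set < 1:
        raise ValueError("max_per_ad_set must be at least 1")
    # Each slice becomes the ad list for one (duplicated) ad set.
    return [ads[i:i + max_per_ad_set] for i in range(0, len(ads), max_per_ad_set)]


ads = [f"ad_{n}" for n in range(1, 8)]  # 7 ads to test
ad_sets = group_ads(ads)
for index, group in enumerate(ad_sets, start=1):
    print(f"ad set copy {index}: {group}")
```

With 7 ads this gives one ad set of 4 and one of 3. With 5 ads, the last group would hold a single ad, so you may prefer to balance the groups by hand.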

Now, this is your first conversion campaign. You are targeting cold audiences or groups who haven’t seen or interacted with your brand yet.

You also want to create a 2nd conversion campaign. This time you put a warm audience in it.

Unlike the cold audience conversion campaign, with the warm audience conversion campaign, you only make one ad set.

In this ad set, you put in the website visitors, users who engaged on your social channels, those who subscribed to your email list, and those who watched your videos recently.

Why would you want to split the cold and warm audience?

You’d want to split it so it’s easier to track them in the dashboard. You can take a glance and you’ll know which insight group you’re looking at.

Plus, there’s a slight difference in the ads or creative you will be using for the warm audience since they have already had an initial interaction with the brand.

So to recap, you’ll make 2 conversion campaigns.

For the cold audience conversion campaign, you’ll make 4-8 ad sets with 3-4 ads or creatives each.

For the warm audience conversion campaign, you’ll only make 1 ad set comprising all those who have already interacted with your brand. In it, you still put 3-4 ads.

Retargeting Campaign

Retargeting campaigns are basically used for those who are already engaged with the brand but are not hot enough to convert yet.

These audiences are those considering the brand but still looking for other options so you have to convince them that you’re the best choice to make.

The audience for a retargeting campaign tends to be very small and specific. For this, we will use the reach objective since the audience is close to the bottom of the funnel.

We will be using one ad set for each retargeting group.

Let’s say you created a campaign for learning about creating Lightroom presets for portrait photography. You can create a retargeting campaign for those who watched your webinar.

You can target them with a testimonial ad, a close-out ad about an expiring offer, or a cart close-out.

In each ad set, you can put up to 10 ads.

Why?

Because a retargeting campaign that uses the reach objective doesn’t have a problem with spend allocation like what you’d experience in a conversion campaign.

Facebook can allocate fairly across all the ads in a reach objective retargeting campaign.

So to recap, you create one retargeting campaign. Then use 1 ad set for each retargeting group, and put as many as 10 ads in each ad set.

Video Views Campaign

If you’re going to run a top-of-the-funnel ad with video views as the main objective, the goal will be for the viewers to consume the whole video so they’ll start warming up and get deeper in the funnel.

For example, for an eCommerce service like a predictive dialer, you could create a video explaining how the tool is used and what benefits it can bring.

Work with illustrators to make the content more appealing.

The structure of a video views campaign is practically the same as that of a cold audience conversion campaign.

Create 4-8 ad sets and in each one put 3-4 ads to ensure the best delivery across all ads.

In other cases, you want the audience to consume other types of content on the website, like a blog post or a report. This is where a website traffic campaign comes in.

You’ll still use the same structure as a cold audience conversion campaign.

We’ve now covered the campaigns used for testing. After some time, you’ll uncover the best audiences, the best creatives, and the best copy. Then it’s time for a scaling campaign.

Scaling Campaign

After running tests and figuring out the best audiences and creatives, you can start a scaling campaign.

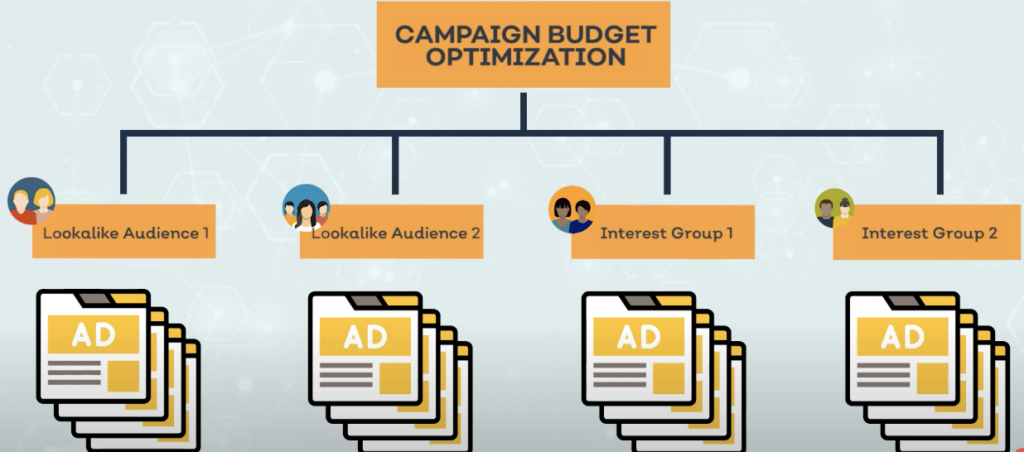

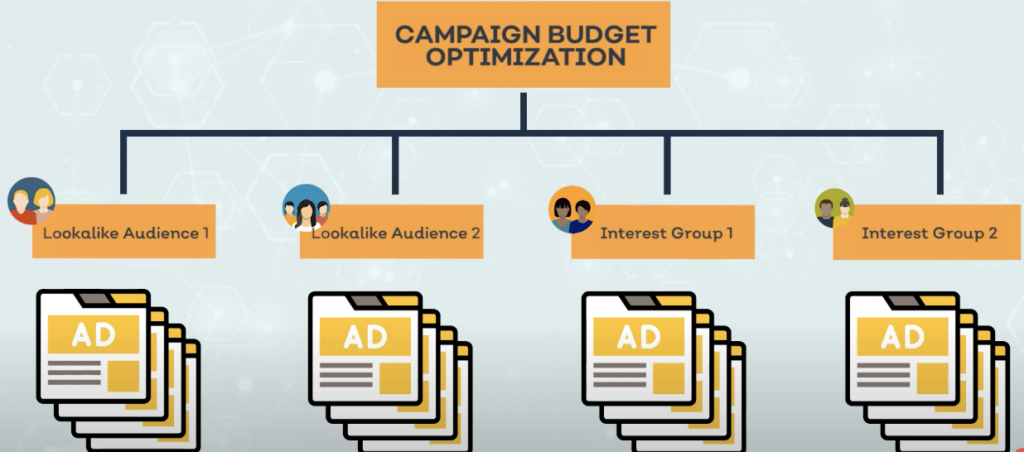

Now with the scaling campaign, you can use campaign budget optimization. At this point, you can allow Facebook to decide where and how much to allocate your budget.

Facebook will look for the best opportunities for you and it’ll focus on spending the budget there.

Create 4 ad sets, one for each of the 4 best audiences you have tested. Still, limit each ad set to 3-4 ads so you can maximize the conversions each ad gets per day.

4 ad sets with 4 ads each will let Facebook’s algorithm optimize properly so you can make the most of your ad budget.

Your CBO budget should be equal to the total daily budget you set for the entire campaign.

Every day or two, you can increase the spend by 20% until you see the conversion rate slowing down. When you reach this point, you can hold the budget at whatever amount you’ve reached.

After some time, the conversions will decline. So while you’re running scaling campaigns, keep testing new ads that can replace the old ads in the scaling campaign.
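To see how quickly 20% steps add up, here is a rough sketch. The helper name `scaling_schedule` and the $100 starting budget are assumptions for illustration, and amounts are in cents. The decision about when to stop (the conversion rate slowing down) stays with you, since the code does not model it.

```python
def scaling_schedule(start_cents: int, steps: int, increase_pct: int = 20) -> list[int]:
    """List the budget after each increase (hypothetical helper, amounts in cents)."""
    budgets = [start_cents]
    for _ in range(steps):
        # Each step raises the previous budget by `increase_pct` percent.
        budgets.append(budgets[-1] * (100 + increase_pct) // 100)
    return budgets


schedule = scaling_schedule(10_000, steps=4)  # start at $100 per day
for step, cents in enumerate(schedule):
    print(f"increase {step}: ${cents / 100:.2f}")
```

Four increases take a $100 daily budget to $207.36, so the budget roughly doubles after the fourth step. That is a good reason to check the conversion rate before each new increase.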

This will help you get consistent conversions and prevent ad fatigue.

So to summarize the whole campaign process:

You start by testing audiences, creatives, and copy using conversion campaigns, retargeting campaigns, or video views campaigns.

Determine the winning audiences and creatives and use them for the scaling campaign with CBO turned on.

Simplified Facebook Ads Campaign Best Practices

For the best practices, we’ll cover 3 areas:

- Audience size

- Placement

- Events

Large Audience Size

In the testing phase, increase your target audience size so Facebook can gather enough data fast.

For this you can:

- Use large groups for a lookalike audience

- Group interests and behaviors with a lot of overlap

- Increase retargeting windows

With large audiences, audience overlap is likely. To minimize it, you can use exclusions, such as excluding past purchasers.

Utilize Automatic Placements

- Increase the ad budget-to-bid ratio

- Use campaign budget optimization when scaling

- Testing creatives at the ad level is much more efficient. No need to create multiple ad sets for each creative

- Use Placement Asset Customization to coordinate messaging across platforms

- Bid based on the audience’s lifetime value

Optimize Ad For The Right Event

If you’re not happy with the performance, don’t turn off your campaigns right away. First, optimize them for an event deeper in the funnel. The extra wait will be worth the upfront cost.

For instance, if you had been optimizing for clicks, switch to an event closer to the checkout, like an add to cart.

Keeping these 3 practices in mind will give you solid growth hacking techniques for Facebook ads.

Choosing The Right Bid Strategy

To simplify bidding, here are 3 strategies you can work with.

- Lowest Cost: This is where you let Facebook set the bid for the conversion event you’re optimizing for

- Target Cost: If you want a specific cost per result, you can set an average cost for each conversion event

- Lowest Cost With A Bid Cap: If you’re targeting a broad audience with lower chances of converting, set a bid cap to manage costs

Guide To Creating Compelling Ad Copy

Optimize and improve your ads if you’re not happy with your ad’s current performance.

Compelling ad copy will reduce the cost per lead, improve click-through rates, and, of course, lift overall performance.

So here are 5 elements of compelling ad copy.

1. Start By Grabbing Attention

The best way to grab attention is to ask a powerful question that the audience will generally answer yes to.

You can use an emotion-stirring question that triggers them to make an internal answer of “yes, that’s me!”.

Doing this will also make your audience feel that you’re speaking to them directly.

How can you do this?

Here are some examples you can get ideas from:

If your audience is people in the manufacturing industry or eCommerce store owners doing the inventory themselves, you can present them with your automated inventory tool by asking: “Did you know you can stop counting your inventory?”.

Or if you’re targeting website owners to promote your user research platform, you can ask: “Is investing in user experience a waste of money?”

The main goal is to state a question that will trigger an emotional response and resonate with your audience.

One thing to keep in mind is to write like you’re part of the target audience. Use the words and phrases they use when telling them about the problem you’re going to solve for them. This will resonate with them better.

If you have no idea how they talk, you can try to jump on a call with them or ask them to fill out a survey. This will give you a good idea of how you can speak like them.

To grab attention, you can also start with a polarizing or controversial statement.

For example, if you’re going to market colorful sarape blankets, a polarizing statement could be: “blankets should always be black and white”.

With a statement like this, people will either agree or disagree.

If they agree, you’ll get them emotionally motivated to continue watching your ad.

If they disagree, they’d still want to watch to prove that their opposing view is correct and that you are wrong.

Now that you’ve grabbed their attention, it’s time to build their interest.

Here’s another example of polarizing copy from Caitlin Bacher:

2. Keep Them Interested

Build up your viewer’s interest by telling them what they can get if they finish the ad. It can be a promise of new information they’ll be learning.

If you use the question “Did you know you can stop counting your inventory?”, you can follow that up by adding: “if you have a barcode system to upgrade your inventory system”.

3. Build Authority And Trust

Before deepening their desire, you first have to build authority and trust so, in their eyes, you’re a credible brand they can listen to and believe in.

This will most likely be the first time they see or hear about your brand. At this point, you’re a stranger that showed up in their Facebook stories or news feed.

Always remember the question in people’s minds – why should I listen to you?

Give them a good reason and they’ll continue giving you their attention.

4. Desire

Now that they can trust you, you can go into detail about your offer, say a migration from one tool to another like a HubSpot migration. In a few sentences, tell them what your offer can do for them.

Paint a picture of what their business will be like or how their life will turn out if they watch your video, attend your webinar like what Meetfox offers, read your blog, or buy your product.

Always tell them what benefits them. Use “you” and not “we” or “us”. Make everything about them and you’ll drive them to the action you’ll want them to take.

5. Call To Action

Make it easy for them to know what to do next. Without a call to action, you can get them interested and still fail to drive them to where you want them to be.

It doesn’t have to be fancy. Just tell them exactly what you want them to do, like: “click here to read the blog” or “click here to check out your options”.

Don’t make them guess the next action; tell them straight what they have to do.

Here’s a more in-depth guide for copywriting.

Conclusion

As an overall recap:

- Campaigns should have 4-8 ad sets with 3-4 ads each

- The exceptions are the warm audience conversion campaign, where you only create 1 ad set with 3-4 ads, and the retargeting campaign, where you create 1 ad set per retargeting group with up to 10 ads each

- For the best practices: use a large audience for testing, automate placements, and optimize for the right event

- Create compelling copy that includes the 5 important elements

We hope you found this helpful. Now you can streamline creating ads using this simplified structure.