Brands like Macy’s and IKEA Retail are leveraging Retail Search and Recommendations AI to help consumers find what they want first and fast.

When a retailer gets product discovery right on their websites and mobile apps, more than half of consumers end up purchasing additional items once they find their target item.

Imagine you walked into a hardware store and asked for a hammer, and the clerk handed you three screwdrivers and a pair of pliers. Or on your next visit to a boutique, you ask for an item hanging behind the register and are told it’s sold out, which makes you just a little crazy, because there are clearly five of them hanging right there.

This may all sound a bit cuckoo. But for shoppers online, it’s a constant occurrence.

Whether shopping at home or in-store, it’s a given now that everyone is doing it with a device either in front of them or by their side, ready to browse merchandise, comparison shop, even to check inventory or check out without needing help from an associate.

At least that’s the ambition. After all the upheaval and innovation of the past few years, digital and omnichannel shopping experiences still have a ways to go, especially when it comes to the ever-evolving challenges of product discovery and personalized experiences. Understanding what customers are looking for, sometimes even before they do, has always been the holy grail for retailers.

And yet even the most innovative companies are still encountering challenges. Some 94% of global consumers have abandoned a shopping session over the previous six months, according to a survey conducted by The Harris Poll and Google Cloud. The reason: they couldn’t find what they were looking for, especially through the retailer’s search engine, which was not returning relevant results.

It’s a frustrating experience for both sides. The customer knows exactly what they want, or they’re excited to find it. And the retailer likely has it in stock, or something even better the consumer hadn’t considered—especially when the retailer can leverage deep insights to build a compelling recommendation engine and personalized offerings.

But when the right pieces aren’t there, the sale falls apart, and possibly even the customer relationship. Search quality and personalization aren’t just about transactions; they’re also about your brand, and a bad search experience can stick in the minds of shoppers. Roughly 85% of consumers in the same Harris survey viewed a brand differently after an unsuccessful search, and 74% said that they avoid websites where they didn’t have a great search experience in the past. For retailers, that means not only lost revenue but also lost customers.

The good news is that the reverse is also true. When a retailer gets product discovery right on their websites, mobile apps—even in those emergent augmented-reality shopping experiments—more than half of consumers end up purchasing additional items once they find their target item, according to the Harris research.

Getting product discovery right, though, is a widely recognized challenge. A majority of website managers say abandoned searches are a known problem at their companies and are concerned about their cost. Still, nearly two out of three say that their company doesn’t yet have a clear plan to make the search experience easier for their customers. They’re looking for solutions.

Why is this so hard? In retail ecommerce, the challenges of providing high-quality search functionality and personalization are centered around three key areas:

- Understanding user intent. Also sometimes called query intent. A search box is a very small place for shoppers to express themselves, and the input is very sparse for a search engine to understand the consumer’s intent.

- Context. The same search term from different users in different locations with different search histories can have very different meanings, which makes it hard to interpret and to apply to search results.

- Product knowledge. It’s very difficult to completely describe products and services just through a long list of attributes and metadata fields.

Many retailers are making strides in these areas by tapping into the pathbreaking power of AI-enabled tools on the cloud. Two recent examples are Macy’s and IKEA, which have been innovating for years and seized the moment of the pandemic in particular to accelerate their digital transformations.

Tackling search abandonment

When the pandemic hit, Macy’s responded by closing some locations and doubling down on its digital presence. The company looked at both internal and external opportunities to further improve its search technologies, meet its customers’ needs, and capture the increased ecommerce demand.

“We wanted to show up to the customer every single time they come to the Macy’s site with an intent, and show them the breadth of selection accurately in an engaging and fast manner,” Jilberto Soto, director of product management for search at Macy’s, recently shared. With increased traffic and a growing catalog, Macy’s needed a flexible platform that could serve both near-term and future needs.

After their deployment of Retail Search, Macy’s saw positive results by two important measurements: click-through rate, which demonstrated both customer engagement and search improvement; and revenue-per-visit, which showed that customers found and ordered products at the end of their visit, building trust and brand affiliation and predicting future visits.
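The two measurements named above can be made concrete with a small sketch. The article does not say how Macy’s defines or computes them, so the definitions here are assumptions: click-through rate is taken as result clicks divided by searches, and revenue-per-visit as total order value divided by the number of visits. The `Visit` record and the sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    """Hypothetical per-visit summary; field names are illustrative only."""
    searches: int    # search result pages shown during the visit
    clicks: int      # clicks on search results during the visit
    revenue: float   # order value attributed to the visit (0.0 if no order)


def click_through_rate(visits):
    """Assumed definition: total result clicks / total searches."""
    searches = sum(v.searches for v in visits)
    clicks = sum(v.clicks for v in visits)
    return clicks / searches if searches else 0.0


def revenue_per_visit(visits):
    """Assumed definition: total revenue / number of visits."""
    return sum(v.revenue for v in visits) / len(visits) if visits else 0.0


# Made-up sample data, not figures from the article.
visits = [
    Visit(searches=2, clicks=1, revenue=0.0),
    Visit(searches=1, clicks=1, revenue=40.0),
    Visit(searches=1, clicks=0, revenue=0.0),
]

ctr = click_through_rate(visits)
rpv = revenue_per_visit(visits)
print(f"CTR: {ctr:.2f}, revenue per visit: {rpv:.2f}")
```

Definitions vary between analytics teams (for example, clicks per impression rather than per search), which is one reason to agree on a single metric and its trade-offs before measuring.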

For others considering Retail Search, Soto recommends some best practices: “It’s super important to understand: Where does your company want to go? What is your long-term strategy, and how does Retail Search support that strategy? For Macy’s, we knew that we were growing our catalog and wanted to attract even more digital customers, and we wanted to better understand how they shop within our stores.”

Soto recommends that retailers define their most important metric, build consensus with leadership around understanding that metric and its trade-offs, and then measure results based on that metric.

Looking forward, Soto underscores the importance of getting an even better understanding of the customer—a priority clarified by the pandemic. “One principle we have here at Macy’s within search is that customers change and so do we. Understanding customer intent as they change, what they’re looking for at our sites, and making sure we show up there, even in areas we didn’t anticipate.”

This includes continuing Macy’s personalization strategy and augmenting its sites to reduce the friction customers face when expressing their intent, both in search and browse. “That may be through pictures, voice, or our faceting,” Soto said. “The great thing about Macy’s is that we have a lot of customers and they all want to come to our site very differently. And we’re here for all of them.”

Hyper-relevant recommendations with AI

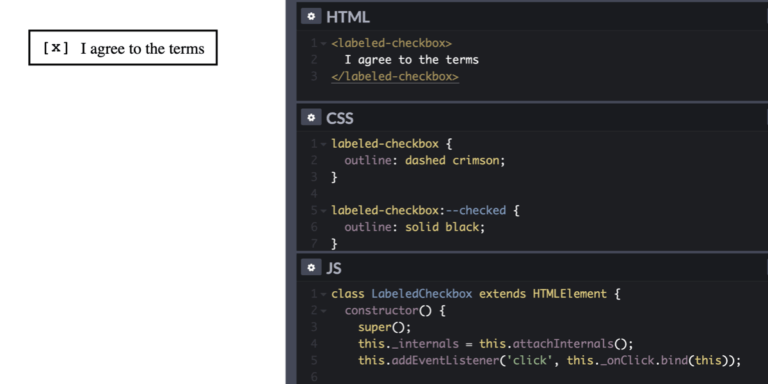

IKEA uses Recommendations AI models to help customers quickly find products they like and settle on a preferred choice among the options.

At IKEA Retail, the shopping journey is a familiar one: multiple places, in various channels, where different kinds of personalization can deliver a superior customer experience. This includes product recommendations in the shopping basket, content recommendations in editorial sections, inspirational recommendations on product pages, and more.

The pandemic altered customer behavior and needs. At that inflection point, IKEA and its parent company Ingka Group decided to change their way of working and dive head-first into a more scientific approach to handling the operational complexities of delivering high-quality product recommendations at scale. They deemed this necessary to improve the level of personalization and to gain a holistic understanding of their customers.

IKEA began using Recommendations AI models like “recommended for you,” “frequently bought together,” and “others you may like.” The company coupled these features with business goals like optimizing for conversion rate, click-through rate, and revenue. This work helped customers quickly find products they liked and settle on a preferred choice among the options, giving them the confidence to make a purchase in far fewer clicks.
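To give a feel for what a “frequently bought together” suggestion involves, here is a deliberately simple sketch based on counting which items appear in the same order. This is not how Recommendations AI works internally, which the article does not describe; it is a swapped-in co-occurrence baseline, and the function names and sample orders are hypothetical.

```python
from collections import Counter
from itertools import combinations


def co_purchase_counts(orders):
    """Count how often each unordered pair of items shares an order."""
    counts = Counter()
    for order in orders:
        # sorted(set(...)) removes duplicate items and fixes the pair order
        for a, b in combinations(sorted(set(order)), 2):
            counts[(a, b)] += 1
    return counts


def frequently_bought_together(item, orders, top_n=3):
    """Return up to top_n items most often ordered alongside `item`."""
    scores = Counter()
    for (a, b), n in co_purchase_counts(orders).items():
        if a == item:
            scores[b] += n
        elif b == item:
            scores[a] += n
    return [other for other, _ in scores.most_common(top_n)]


# Made-up order history, not data from the article.
orders = [
    ["desk", "chair", "lamp"],
    ["desk", "chair"],
    ["desk", "lamp"],
    ["chair", "rug"],
    ["desk", "chair"],
]

print(frequently_bought_together("desk", orders))
```

A production system would also weigh context, personalization, and the business goal being optimized, which raw co-occurrence counts ignore.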

Even though IKEA Retail previously had well-tuned recommendations of several types, with Recommendations AI it measured an improvement in click-through rates that exceeded 30% and saw its global average order value for ecommerce increase by more than 2%.

This success has already inspired IKEA to continue to evolve its integration with Recommendations AI, down to how it can inform the look and feel of its digital shopping experience.

Turning omnichannel into a loyalty engine

With today’s consumers increasingly expecting a seamless and personalized online shopping journey, search abandonment and personalization are real challenges facing retailers. Addressing the reasons why a shopping session is abandoned can help retailers meet their customers’ needs, boost conversions, and ensure greater customer loyalty and satisfaction.

Making sure shoppers find what they want and earning their loyalty has always been the heart of the retail industry. With cloud-enabled tools like Retail Search and Recommendations AI, retailers can keep that heart beating.

Learn more about the costs of search abandonment and customized solutions that help retailers enhance their customers’ shopping journeys. Check out Why “search abandonment” is the metric that matters.