Comparing Google and Microsoft’s Success in Capitalizing on Generative AI

Alphabet CEO Sundar Pichai is confident that Google will find a way to make money selling…

WooCommerce is one of the most popular eCommerce plugins for WordPress, and with good reason. Its…

WordPress plugins add functionality and features to your website, helping you customize it to your specific…

Google spent much of the past year hustling to build its Gemini chatbot to counter ChatGPT,…

Security is a massive topic in the modern world. Mental, physical, emotional, financial, cyber, we all…

The White House has issued new rules aimed at companies that manufacture synthetic DNA after years…

This week, WIRED reported that a group of prolific scammers known as the Yahoo Boys are…

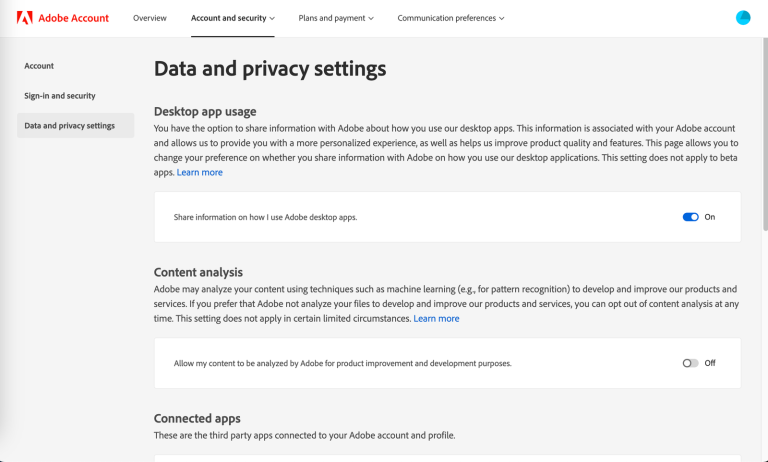

Thanks to powerful marketing, privacy is something we’ve come to immediately associate with Apple. But your…

Brian Venturo and a couple of fellow hedge fund buddies bought their first GPUs as part…

One task where AI tools have proven to be particularly superhuman is analyzing vast troves of…

Welcome back to the FastComet family album! Today, we’re meeting a hero who might not swing…

The algorithm has won. The most powerful social, video, and shopping platforms have all converged on…

Have you heard the slogan “For the community, by the community”? You may have many times,…

Jason Matheny is a delight to speak with, provided you’re up for a lengthy conversation about…

We all know how important website security is, right? We hope you said “yes” because it…

Two of the biggest deepfake pornography websites have now started blocking people trying to access them…

Every picture and video you post spills secrets about you, maybe even revealing where you live….

I was recently waiting for my nails to dry and didn’t want to smudge the paint,…

Last October, I received an email with a hell of an opening line: “I fired a…

The other night I attended a press dinner hosted by an enterprise company called Box. Other…

Forget artificial intelligence breaking free of human control and taking over the world. A far more…

In November 2019, the US government’s National Security Commission on Artificial Intelligence (NSCAI), an influential body…

If you’ve ever posted something to the internet—a pithy tweet, a 2009 blog post, a scornful…

The time has come again to take a look at another significant WordPress release: 6.5! Right…

You answer a random call from a family member, and they breathlessly explain how there’s been…

Financial privacy has practically vanished over the last 50 years. Most people are in denial about…

We’ve all been astonished at how chatbots seem to understand the world. But what if they…

Here at FastComet, we are dedicated to continually improving our services and all their features. We…

For the past few months, Morten Blichfeldt Andersen has spent many hours scouring OpenAI’s GPT Store….

Yes, San Francisco is a nexus of artificial intelligence innovation, but it’s also one of the…

Apple and Google are reportedly in cahoots to integrate features from Google’s Gemini generative AI service…

Whether you’re a student, a journalist, or a business professional, knowing how to do high-quality research…

Ever since the rollout of ChatGPT in November 2022, many people in science, business, and media…

Welcome to the FastComet family album! Unlike dusty old photo albums, ours are bursting with life,…

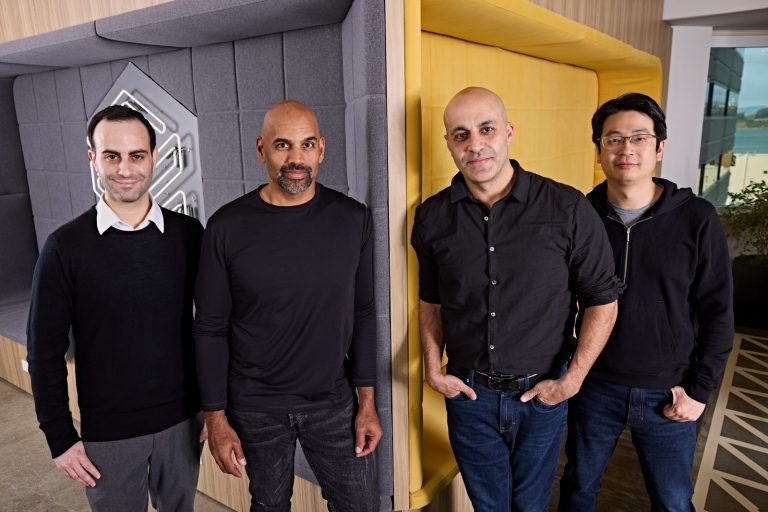

This past Monday, about a dozen engineers and executives at data science and AI company Databricks…